OpenRouter-Compatible Chatbot for WordPress

If you run a WordPress or WooCommerce site, you usually pick one AI provider and stick with it. That works until you want to test different models, improve answer quality, or control costs.

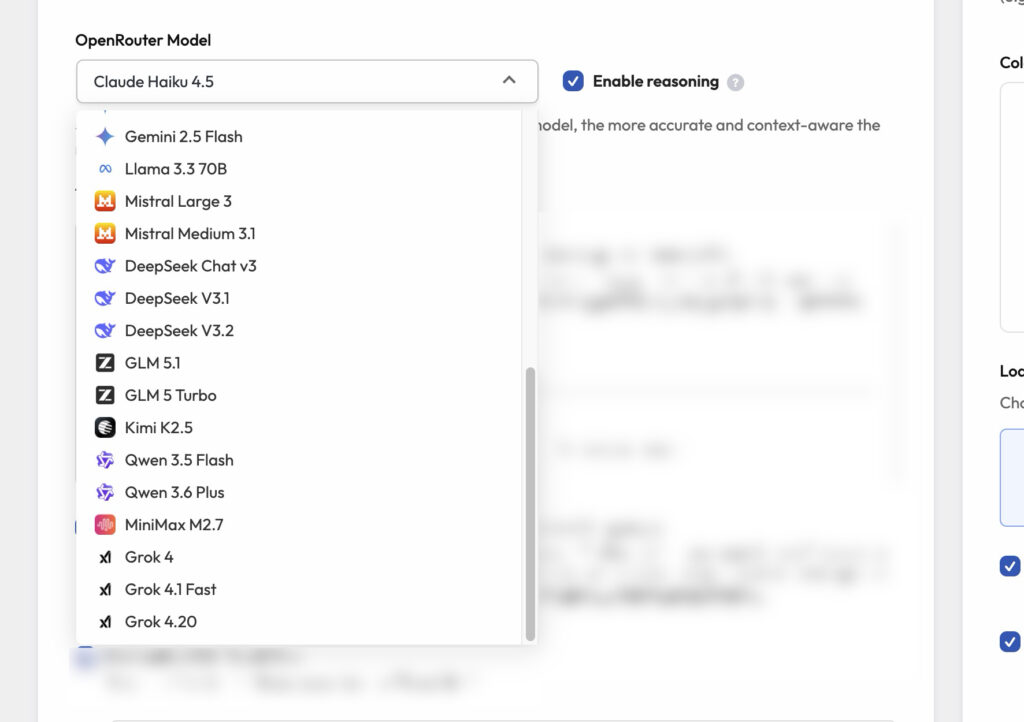

OpenRouter fixes that problem. It’s a single API that gives you access to many models through one integration. In practice, you can run your WordPress chatbot on GPT, Claude, and Gemini model families, plus other popular families that may appear in your plugin’s OpenRouter model list (for example GLM, DeepSeek, Kimi, Qwen, or Grok). The exact list and model names depend on the providers you enable and what OpenRouter exposes in your account.

Why use OpenRouter in a WordPress chatbot?

Because it lets you treat models like a setting, not a rewrite.

- One provider integration, many model choices.

- Fast testing: switch models in minutes, not days.

- Cost control: use cheaper models for simple questions, stronger ones for hard questions.

- Cleaner ops: one billing account, one key rotation process.
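To make the cost-control idea concrete, here is a minimal sketch of routing questions to a cheaper or stronger model. The model IDs, the keyword list, and the word-count threshold are all hypothetical; this is not how any particular plugin decides, just one simple way to think about it.

```python
# Hypothetical model IDs; replace with models available in your OpenRouter account.
FAST_MODEL = "example/fast-model"
STRONG_MODEL = "example/strong-model"

# Assumed signals that a question needs more reasoning; tune these for your own site.
HARD_HINTS = ("compare", "difference", "why", "refund", "compatible")


def pick_model(question: str, max_simple_words: int = 12) -> str:
    """Return the cheaper model for short, simple questions and the stronger one otherwise."""
    text = question.lower()
    is_long = len(text.split()) > max_simple_words
    has_hard_hint = any(hint in text for hint in HARD_HINTS)
    return STRONG_MODEL if (is_long or has_hard_hint) else FAST_MODEL


print(pick_model("What are your opening hours?"))
print(pick_model("Why is plan A more expensive, and how does it compare to plan B?"))
```

A keyword heuristic like this is crude, but it shows the point: the routing rule lives in one small function, and the rest of the chatbot does not change.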

AI Chat & Search – chatbot that works with OpenRouter

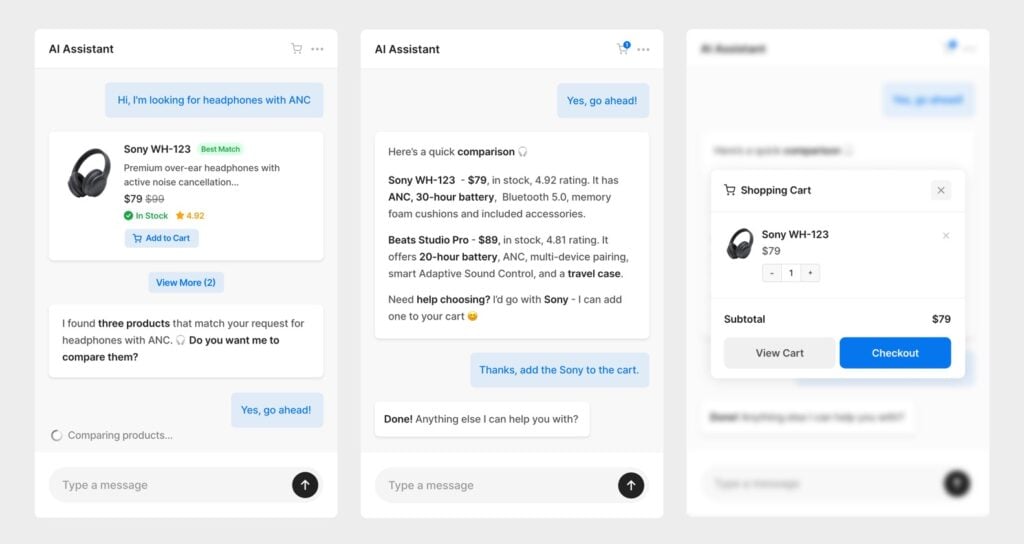

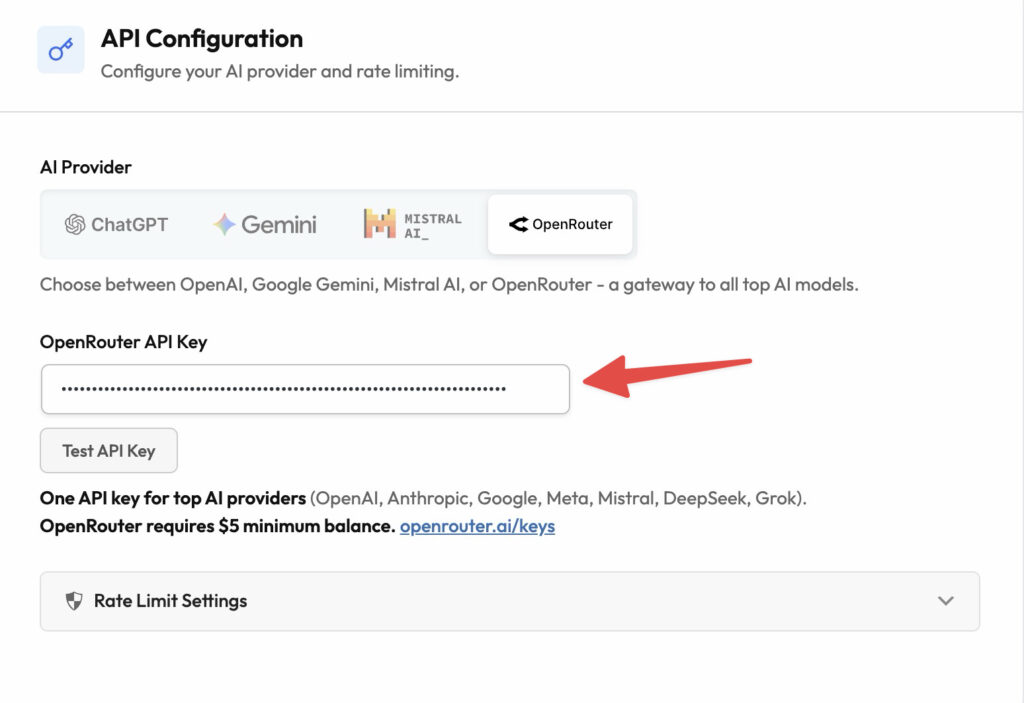

If you want a WordPress-native chatbot with knowledge-base grounding (RAG) and WooCommerce Q&A, AI Chat & Search Pro works with OpenRouter out of the box. You connect your OpenRouter API key in the plugin, then pick models from the “OpenRouter Models” group. The models listed there depend on what OpenRouter exposes in your account.

- OpenRouter support: connect your OpenRouter API key and run the chatbot on OpenRouter models without rebuilding your setup.

- Favorite models: set and quickly select your preferred models (for example a fast default plus a stronger fallback, depending on provider availability and your OpenRouter setup).

- Free vs Pro: start with the Free version, then upgrade to Pro when you need the full feature set.

Learn more: AI Chat & Search Pro

Get the Free version: Download AI Chat & Search

See plans: AI Chat & Search Pro pricing

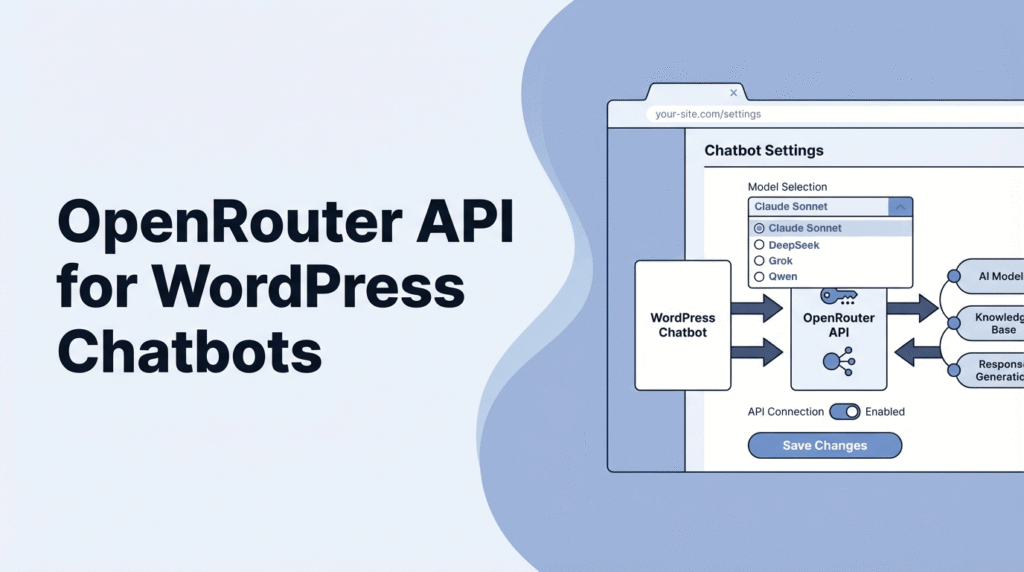

OpenRouter API: what it is

The OpenRouter API is a gateway. Your WordPress chatbot plugin sends chat requests to OpenRouter, and OpenRouter routes them to the model you selected.

For end users, the benefit is simple: you manage one OpenRouter account and one API key, and switching models becomes a settings change (availability depends on your OpenRouter account and enabled providers).
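If you are curious what a plugin sends behind the scenes, the sketch below prepares a chat request for OpenRouter's chat completions endpoint without sending it. The API key and model ID are placeholders, and the helper name `build_chat_request` is made up for this example; a real plugin will add more fields, such as a system prompt and retrieved site content.

```python
import json
import urllib.request

# OpenRouter's chat completions endpoint (OpenAI-style request and response).
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, user_message: str) -> urllib.request.Request:
    """Prepare (but do not send) a chat request for the selected model."""
    payload = {
        "model": model,  # the only field that changes when you switch models
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and hypothetical model ID, for illustration only.
request = build_chat_request("YOUR_OPENROUTER_API_KEY", "example/fast-model", "Do you ship to Canada?")
print(request.full_url)
print(json.loads(request.data)["model"])
# To actually send it, you would call urllib.request.urlopen(request).
```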

OpenRouter API compatibility

Many AI tools and plugins are built around the OpenAI-style chat completions pattern: a list of role-tagged messages goes in, and a generated reply comes out. OpenRouter follows the same pattern, which means your chatbot plugin can treat OpenRouter as a drop-in model source instead of a custom integration.

In plain terms, compatibility reduces lock-in. You can keep your WordPress chatbot setup, knowledge base grounding, and WooCommerce logic the same, and only change the model you run on.
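One way to picture "drop-in" is a provider setting that only changes the base URL while the message format stays the same. The sketch below assumes a hypothetical `ChatProvider` class and `build_payload` helper, plus a placeholder model ID; the two base URLs are the public OpenAI and OpenRouter API roots.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChatProvider:
    """A hypothetical provider setting: a display name plus an API base URL."""
    name: str
    base_url: str

    def chat_url(self) -> str:
        # Both providers expose the same path under their base URL.
        return f"{self.base_url}/chat/completions"


OPENAI = ChatProvider("OpenAI", "https://api.openai.com/v1")
OPENROUTER = ChatProvider("OpenRouter", "https://openrouter.ai/api/v1")


def build_payload(model: str, question: str) -> dict:
    """The same message shape works for either provider."""
    return {"model": model, "messages": [{"role": "user", "content": question}]}


payload = build_payload("example/fast-model", "What is your return policy?")
for provider in (OPENAI, OPENROUTER):
    print(provider.name, "->", provider.chat_url())
```

The knowledge-base and WooCommerce logic sit above this layer, which is why they can stay untouched when the model source changes.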

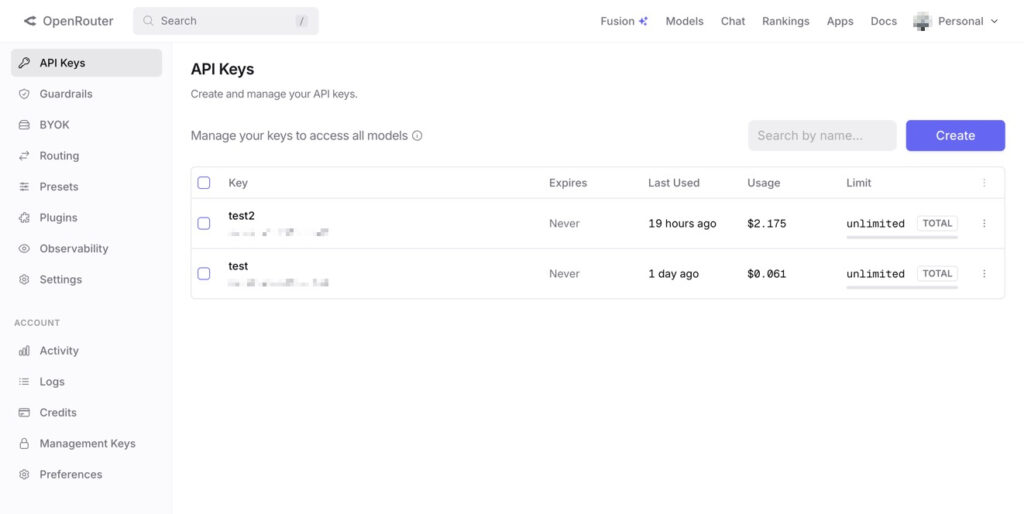

OpenRouter API key: how to get it

You’ll generate your OpenRouter API key in the OpenRouter dashboard. Treat it like a password. If it leaks, someone can spend your credits.

- Go to openrouter.ai and sign in.

- Open your account dashboard and find the API keys section.

- Create a new key, then copy it once and store it securely.
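If you also use the key in your own scripts outside WordPress, a minimal sketch of "treat it like a password" could look like this: read the key from an environment variable instead of hard-coding it, and mask it before it reaches any log. The variable name `OPENROUTER_API_KEY` and both helper names are assumptions made for this example.

```python
import os


def load_api_key(env_var: str = "OPENROUTER_API_KEY") -> str:
    """Read the key from the environment; fail loudly if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first.")
    return key


def mask_key(key: str, visible: int = 4) -> str:
    """Return a log-safe version of the key that shows only the last few characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]


# Placeholder value, not a real key.
print(mask_key("example-key-1234"))
```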

Where to paste the key in WordPress

In AI Chat & Search Pro, you paste the key in the OpenRouter provider settings. Then save and run a quick test chat from the widget.

After that, you’ll usually want to enable grounding so the bot answers from your site content. If you want a step-by-step walkthrough, see how to train an AI chatbot on a WordPress knowledge base.

OpenRouter pricing: what you pay for (and what you don’t)

OpenRouter pricing is mostly usage-based, and it depends on the model you choose. That is both the tradeoff and the benefit: stronger models cost more per request, but you only pay for what you actually use.

For WordPress sites, the practical way to think about it is:

- Your WordPress plugin: a one-time cost, if you pick a one-time-purchase plugin like AI Chat & Search Pro.

- Your model usage: pay for the tokens each request consumes, priced by the model you choose in OpenRouter.

If you’re comparing pricing models in general, keep in mind that OpenRouter costs come from model usage (tokens), not from WordPress itself. Related: WordPress chatbots without monthly fees.
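To get a feel for token-based costs, here is a rough estimate in code. Every number in it is hypothetical, including the traffic, the token counts, and the prices, and it assumes prices are quoted per million tokens with separate input and output rates. Check the current price of your chosen model before budgeting.

```python
def estimate_monthly_cost(
    chats_per_month: int,
    input_tokens_per_chat: int,
    output_tokens_per_chat: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate monthly model spend in USD from token counts and per-million-token prices."""
    input_cost = chats_per_month * input_tokens_per_chat * input_price_per_million / 1_000_000
    output_cost = chats_per_month * output_tokens_per_chat * output_price_per_million / 1_000_000
    return round(input_cost + output_cost, 2)


# Hypothetical traffic and prices; look up real prices for your chosen model.
cost = estimate_monthly_cost(
    chats_per_month=2_000,
    input_tokens_per_chat=1_500,  # the question plus retrieved site content
    output_tokens_per_chat=300,
    input_price_per_million=0.50,
    output_price_per_million=1.50,
)
print(f"Estimated monthly model cost: ${cost:.2f}")
```

Note that grounding adds input tokens, because the retrieved site content is sent along with each question. That is usually the larger share of the bill for a knowledge-base chatbot.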

Practically, OpenRouter is a good fit when you want to test models quickly, balance cost vs quality, and avoid reconfiguring your WordPress chatbot every time you change providers.

OpenRouter free models: how to use them for testing

OpenRouter free models are great for initial setup and UI testing. Use them to confirm your WordPress plugin configuration works before you spend money on higher-end models.

A simple workflow:

- Connect OpenRouter in WordPress.

- Pick a free model for a first smoke test.

- Turn on grounding (RAG) and test questions that require your site content.

- Switch to your preferred paid model once everything behaves.
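For the smoke-test step, the basic question is whether the model returned any text at all. The sketch below checks that against an OpenAI-style response shape, which OpenRouter follows. The sample response is hand-written for illustration, not real API output, and the function names are made up for this example.

```python
def extract_reply(response: dict) -> str:
    """Pull the assistant's text out of an OpenAI-style chat completion response."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("No choices in response; check the model ID and API key.")
    content = choices[0].get("message", {}).get("content") or ""
    return content.strip()


def smoke_test_passed(response: dict) -> bool:
    """A first-pass check: the model returned some non-empty text."""
    try:
        return bool(extract_reply(response))
    except ValueError:
        return False


# Hand-written sample in the OpenAI-style shape, not real API output.
sample = {"choices": [{"message": {"role": "assistant", "content": "Hello! How can I help?"}}]}
print(smoke_test_passed(sample))
print(smoke_test_passed({"choices": []}))
```

A passing smoke test only proves the connection works. Answer quality still needs the grounding test in the third step, using questions that require your site content.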

If you’re building a WooCommerce assistant, keep the model cost in mind and prioritize good grounding. Our WooCommerce AI chatbot guide goes deeper on the setup approach.

FAQ

Is the OpenRouter API free?

OpenRouter is not “free” in general. It’s a paid gateway with usage-based pricing. That said, OpenRouter also offers free models that are useful for testing your WordPress integration before you switch to paid models.

Do I need an OpenAI key to use the OpenRouter API?

No. If your WordPress plugin is connected to OpenRouter, you use your OpenRouter API key. You don’t need a separate OpenAI account just to run OpenRouter.

Which models should I start with for a WordPress chatbot?

Start with a fast model for setup and UI testing, then evaluate a stronger model on your real support questions. OpenRouter makes it easy to compare model families like GPT and Claude. You may also see other options in your plugin’s OpenRouter model picker (for example GLM, DeepSeek, Kimi, Qwen, or Grok), depending on what OpenRouter exposes in your account and which providers are enabled.

Can I use OpenRouter with a WooCommerce chatbot?

Yes. OpenRouter is just the model gateway. The WooCommerce features come from your WordPress plugin, like product Q&A, recommendations, and grounding on your catalog. OpenRouter helps when you want to test different models without reworking your integration.